With AI capabilities rapidly increasing, humans appear close to developing AI systems that are better than human experts across all domains. This raises a series of questions about how the world will—and should—respond.

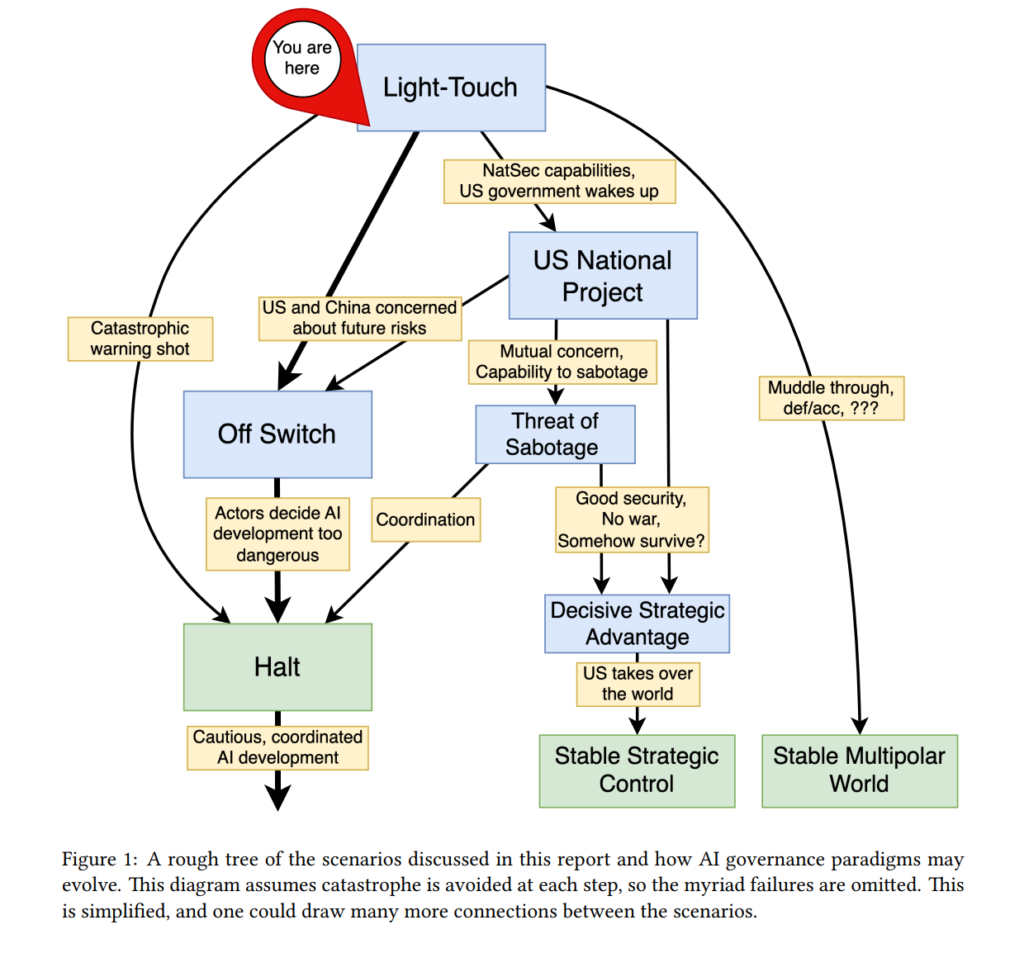

In the research paper AI Governance to Avoid Extinction: The Strategic Landscape and Actionable Research Questions, originally published in May 2025, MIRI’s Technical Governance Team describes four possible geopolitical responses to advanced AI development and explores pros, cons, and risks for each. They conclude that the only trajectory without unacceptably high levels of catastrophic harm is a scenario in which humanity develops the ability to monitor and restrict AI development (an “off switch”) and then likely uses it to put a long-term halt on frontier AI development.

In this post, we informally summarize the four trajectories described in the paper, exploring how each trajectory would influence and respond to existing AI dynamics and risks. For a more technical overview, or to see governance research questions relevant to each trajectory, we recommend the executive summary or the full report.

The four trajectories described are:

- Off Switch and Halt: International collaboration to develop the ability to monitor and restrict AI at some point in the future (an “off switch”). This could eventually lead to a moratorium on frontier AI development (a “halt”).

- US National Project: The US races to build superintelligent systems and control global AI development.

- Light-Touch: The government takes a light-touch approach to regulation, mostly leaving AI companies to act as they see fit.

- Threat of Sabotage: Countries don’t want others to gain a strategic advantage or create dangerous technology, so they sabotage or threaten rival AI development.

Before examining each scenario in more depth, it’s helpful to briefly cover the core considerations used to evaluate them.

Core considerations

AI systems are becoming increasingly capable and autonomous. Competitive pressures drive the development of systems that can operate agentically, with little human oversight, to accomplish long-term goals. At the current pace, labs are soon likely to develop systems that exceed the best humans at any task, despite little to no understanding of how these systems function and no robust means to steer and control their behavior. The default outcome is likely catastrophe and human extinction. For a governance system to be effective, it must avoid catastrophic outcomes such as:

- Loss of control: AI pursues goals that aren’t aligned with human interests, likely resulting in human extinction.

- Misuse: Malicious or reckless actors use AI in dangerous ways (e.g., to develop biological weapons or other weapons of mass destruction).

- War: An AI-related struggle between two great powers causes catastrophic harm.

- Authoritarianism/lock-in: Society is locked into harmful conditions or values.

Below, we examine each of the four trajectories discussed in the report and discuss how well they guard against the catastrophic outcomes above. We cover the Off Switch and Halt scenario in the most detail, since we believe it is the most viable path to avoid catastrophe.

Off Switch and Halt

The Off Switch and Halt scenario describes a trajectory where humanity decides to create the ability to shut down AI development (an “off switch”). This may eventually result in a halt, if the off switch is used.

Given that the scientific understanding of AI systems is still in its infancy, a global halt to AI development may be necessary to ensure humanity has time to gain sufficient understanding of these systems before they are intentionally or accidentally released.

Off Switch

An off switch refers to building the necessary technical, legal, and institutional infrastructure to make it possible for humanity to shut down unsafe AI systems and AI projects. It will also make it possible to verify that there is no dangerous AI development globally, if humanity decides to halt.

Because a halt may be necessary to avoid catastrophe-level risks, including human extinction, and because building the required infrastructure could take years, there’s a strong case to begin implementing the off switch. The off switch could be pursued without requiring global consensus on the need for a halt, as it seems uncontroversial that humanity should be able to halt a potentially catastrophic technology if it wanted to.

An off switch may appeal both to those who primarily have extinction-level alignment concerns and to those who have a broader range of concerns. For example, being able to monitor, evaluate, and, if necessary, enforce restrictions on frontier AI development would also reduce risks from terrorism, geopolitical destabilization, and other societal disruption.

Halt

There are several ways an off switch might be triggered, once it is in place. For example, an AI disaster that the US or global community recognizes as a “warning shot” indicating imminent catastrophic risk could result in broad consensus to enact a short-term pause with the intention of acute risk mitigation. The short-term pause could either be domestic (if the US were confident other powers’ technology didn’t yet pose a threat, and the domestic pause was used as leverage to later enact a global pause) or international (likely both safer and more politically viable).

Humanity might also decide not to wait for a “warning shot” before implementing a pause, since there is no guarantee of getting such a warning before a calamitous event ensues. These considerations might motivate the international community to trigger the off switch sooner.

A short-term pause would also allow time to put appropriate measures in place for a stable, long-term moratorium. During a long-term moratorium, many AI activities would likely still be allowed. For example, narrow AIs could help solve biological and medical challenges.

A long-term moratorium would provide time for humanity to gain sufficient understanding of AI systems to confidently solve safety issues. It would also allow time to adapt to a world with advanced AI, a world that might face widespread labor disruption and threats from new weapons technologies.

A stable long-term moratorium would need to cover all forms of dangerous AI activities and be lasting, global, and enforceable.

Enforcement

Any global pause on AI development would require international agreements. For such pauses to be effective and enforceable, agreements would need to clearly specify which activities are permitted and prohibited, establish verification mechanisms to ensure compliance, and determine how to handle violations.

Several verification mechanisms could allow the terms of an international agreement to be effectively governed, including closely monitoring and restricting the use of the advanced computer chips (“compute”) required to make AI, restricting the publication of algorithmic improvements that could allow superintelligence to be developed with dramatically less compute, and steering key researchers towards safer activities.

Outlook

The Off Switch and Halt trajectory is the best path to mitigate loss of control risks from AI. It would prevent labs from building superintelligence without knowing how to steer it. The monitoring infrastructure necessary for an off switch and halt would also address misuse by bad actors. If the off switch were built via international cooperation, it would lower the risk of war and help prevent authoritarian lock-in.

US National Project

The US National Project describes a scenario in which the US decides to advance AI capabilities with the aim of gaining a decisive strategic advantage over the rest of the world. In this scenario, the government would exercise some domestic control over AI development (possibly via a centralized project akin to the Manhattan Project) and make efforts to keep the technology from crossing borders (through measures like export controls on chips and securing algorithmic secrets). A key part of the US National Project would be the goal of reaching superintelligence before any other country.

Enforcement

A US National Project would need to improve security at domestic labs to ensure that algorithmic secrets and model weights would not cross national borders. It would also need an accurate way of measuring the US lead, which presents challenges given that model evaluations (even domestically) are not very reliable. For example, models may purposely underperform on evaluations, and capabilities may improve faster than benchmarks can be created.

Outlook

The US National Project is too dangerous to constitute a viable response. It carries an extremely high risk of loss of control, dependent as it is on racing to build superintelligent systems before understanding how to steer them. It also carries significant risk of war or sabotage, as other powers will likely want to deter the US from reaching its goals, and of authoritarian lock-in, as countries seek unilateral control over AI development.

Advanced AI systems are fundamentally different from traditional technology in that they will likely have their own goals. This, combined with the current state of alignment research, makes it likely that leveraging advanced AI would not actually result in a decisive strategic advantage, even if nothing else were to go wrong. In other words, even in a world where the US successfully beat all other countries in the race to superintelligence, and was able to use that to exert geopolitical power, any such result would not be stable. The advanced models themselves would immediately or eventually end up with the power.

Light-Touch

Light-Touch describes a trajectory similar to the current state of affairs (as of April 2026) in which governments aren’t heavily involved in AI development, mostly leaving development and key decisions to the labs.

It may end up somewhat similar to the US National Project, in that governments get involved primarily to prevent proliferation of important research to competing powers, but with the labs in charge instead of government officials.

Since the Light-Touch scenario is likely to lead to the open use of very powerful models, defensive acceleration—developing advanced military weapons with the goal of defending against adversaries that are using powerful AI for harmful purposes—may play a key role.

Enforcement

While the scenario is by definition light-touch, as the situation evolves and AIs become ever more militarily and economically important, governments would likely get more involved, especially since the open release of advanced AI models poses grave threats to national and international security. For example, governments may ensure visibility into AI companies, attempt to stop companies from dangerous competition with each other, and implement stronger security to prevent theft by state actors.

Outlook

The Light-Touch scenario may carry a slightly lower risk of war than the US National Project, in that the race would be somewhat quieter. It may also carry a slightly lower risk of authoritarian lock-in, in that development would be more decentralized. However, it is still much too dangerous to constitute a viable path forward.

The open release of highly capable AI models would dramatically increase the risk of misuse by bad actors, who may use them for biological weapons development and terrorism. Defensive acceleration is likely not robust enough to effectively defend against such risks, as AI-assisted technologies like bioweapons are easier to make than to defend against. Additionally, decentralized, unregulated development could still magnify existing societal power imbalances such as extreme wealth inequality. War between competing powers is also still a risk, especially if the US lead is clear or suspected.

Most importantly, the Light-Touch scenario does nothing to address the loss of control risks that are likely the default outcome from current methods of frontier AI development.

Threat of Sabotage

The Threat of Sabotage scenario describes a trajectory where countries either threaten to sabotage each other’s advanced AI projects or actively do so, resulting in a world where capabilities are kept low enough to pose limited danger. Countries may threaten sabotage if they are concerned about one power gaining a strategic advantage or if they are concerned about loss of control risks from advanced AI capabilities.

Enforcement

The Threat of Sabotage scenario would primarily work via deterrence, in that the threatened consequences would be severe enough to deter countries from pursuing advanced AI projects. It’s possible that countries would also engage in direct sabotage as retaliation for norm deviation, via cyberattacks, model poisoning, and, in more extreme cases, destruction of AI data centers or AI chip supply chains. International agreements may play a role as well, for example to define AI capabilities of concern and leave open the possibility of future monitoring agreements in order to forestall clandestine AI development projects.

Outlook

For a Threat of Sabotage scenario to govern AI risk, nations would need to monitor other powers’ progress and be able to sabotage development if projects advanced beyond permitted levels. This could prove difficult, requiring countries to identify and respond to threatening projects before they progressed into the danger zone. It may be possible to increase visibility into AI projects via agreements granting monitoring access, and to slow down the advancement of potentially threatening projects through agreements that constrain capabilities infrastructure. These agreements would follow historical precedents like the nuclear START treaties and the 1922 Washington Naval Treaty.

That said, a deterrence regime, along with acts of sabotage, could raise geopolitical tensions and result in war. Additionally, even if countries stopped racing to gain a strategic advantage, they could still build advanced AI systems for economic reasons. Some of these systems may be capable enough to pose loss of control risks.

Takeaways and recommended policy actions

The only sufficient response to the current situation, in which smarter-than-human AI is imminent despite an immature scientific field, is a global halt. The current path, along with its most likely variations, poses unacceptably large risks, including terrorism, great power war, lock-in of bad values, and loss of control due to misaligned systems.

If a halt is not politically feasible at this time, then we should start with an “off switch”, and build the technical, legal, and institutional infrastructure we would need in order to halt. Then, if there is political will, we will be able to pause AI development until the field is advanced enough to build powerful systems safely.