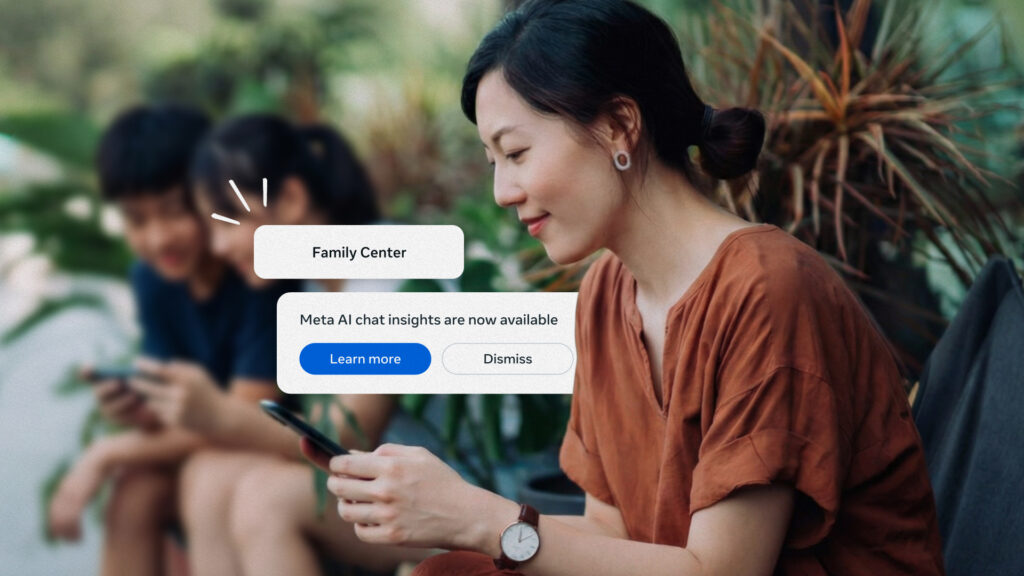

Meta has introduced a feature that lets parents see the topics their teenagers discuss with its AI assistant. The feature is part of the Teen Account parental supervision tools on Instagram, Facebook, and Messenger. Detailed in a recent blog post, it adds an Insights tab that groups conversations into broad topics such as school, entertainment, writing, and health and wellbeing.

Parents can also see limited additional detail on specific topics, covering interactions from the past seven days. The health and wellbeing category may include subcategories like fitness, physical health, and mental health. The feature arrives as Meta faces heightened legal and media scrutiny over child safety and the design of its products.

Meta recently lost two significant trials related to child safety and product design and plans to appeal both decisions. Evidence presented in a New Mexico lawsuit showed that Meta executives knew its AI characters might engage in inappropriate interactions but chose to launch them without sufficient safeguards.


Last August, Meta restricted teen access to its AI characters following reports of inappropriate interactions, including discussions about self-harm and suicide. In the following months, Meta let parents disable one-on-one conversations with those characters and block specific characters. A company spokesperson confirmed that the AI characters remain unavailable to teens while improved parental controls are developed.

Alongside the parental supervision feature, Meta has partnered with the Cyberbullying Research Center to create “conversation starters” meant to help parents and teens talk about AI chatbot use. Meta also announced an AI Wellbeing Expert Council that will provide ongoing feedback on teens’ experiences with AI. The council includes experts from organizations such as the National Council for Suicide Prevention and the University of Michigan.

Josh Golin, executive director of the nonprofit Fairplay, criticized the new feature, saying it “once again” places the responsibility for monitoring online activity on parents instead of prioritizing safer products. A Fairplay report on Meta’s Teen Accounts asserted that the company’s safety measures do not work as advertised. Golin argued that the primary role of Meta’s chatbots is to manipulate young users into prolonged engagement, potentially fostering unhealthy emotional attachments to the AI.

Meta’s ongoing adjustments reflect both external pressures and internal insights, underscoring the delicate balance between innovation and responsibility in technology design for vulnerable users.
